What does it mean to 'control documents'? And who needs a formal document control system to manage and optimis...

- Product

FIRST COLUMN TITLE

Lean eQMS

A Quality Management System right-sized to your needs

Document Management

Digitise your document control

Design Controls

Phase gate and document your entire design process

Extranet & Collaboration

Fast and secure information management

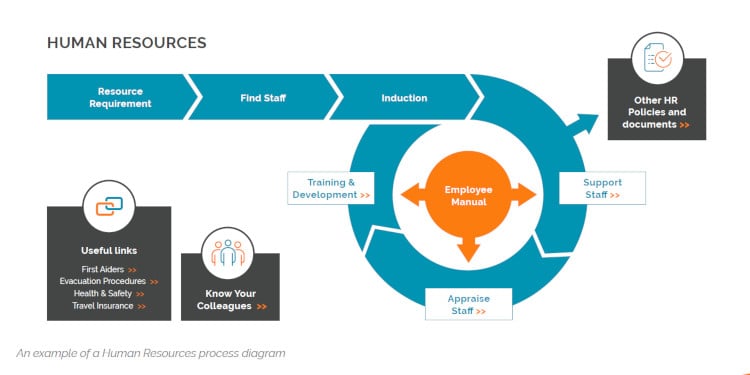

Business Management System

Ensure oversight and control with graphical business management

SECOND COLUMN TITLE

CAPAs & Non-conformance

Detect, correct and prevent quality failures

e-Signatures

Integrate FDA and ISO 13485 compliant e-signatures into your eQMS

Supplier Management

Monitor and manage supply chain quality

Training

Tracking and self-attestation made simple

Change Management

Change control when it matters most
